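🎉 Build Your Own Personal Voice AI Agent to Control All Your Apps ⚡

Tired of building the same old text-based chatbots that can only chat? 🥱

Yeah, same here.

What if you could just talk to your AI and have it control Gmail, Notion, Google Sheets, or any other app you use, without ever touching your keyboard?

If that sounds like something you want to build, stick around till the end. It's going to be fun.

Let's build it all, step by step. ✌️

What's Covered?

In this tutorial, you'll learn how to build your own voice AI agent using Composio. It's like having ChatGPT and all your tools in one place, controlled by voice (or by chat, if you prefer)!

What you'll learn: ✨

- How to use speech recognition in Next.js

- How to power your voice AI agent with Composio

- And most importantly, how to write the code so that everything is production-ready.

Want to see how it looks? Here's a quick demo where I use Gmail and Google Sheets together! 👇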

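Project Setup 👷

Initialize a Next.js Application

🙋‍♂️ In this section, we'll complete all the prerequisites for building the project.

Initialize a new Next.js application with the following command:

ℹ️ You can use any package manager you like. For this project, I'll use npm.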

npx create-next-app@latest voice-chat-ai-configurable-agent \
--typescript --tailwind --eslint --app --no-src-dir --use-npm

Next, navigate into the newly created Next.js project:

cd voice-chat-ai-configurable-agent

Install Dependencies

We need a few dependencies. Run the following command to install them:

npm i composio-core zustand @langchain/core @langchain/openai \
framer-motion openai react-speech-recognition use-debounce

它们用于以下用途:

- composio-core:将工具集成到代理中

- zustand:一个简单的状态管理库

- openai:提供人工智能驱动的响应

- framer-motion:为用户界面添加流畅的动画效果

- react-speech-recognition:启用语音识别

- use-debounce:为语音输入添加防抖功能

配置 Composio

ℹ️ 我们将使用 Composio 为我们的应用程序添加集成。您可以选择任何您喜欢的集成,但请务必先进行身份验证。

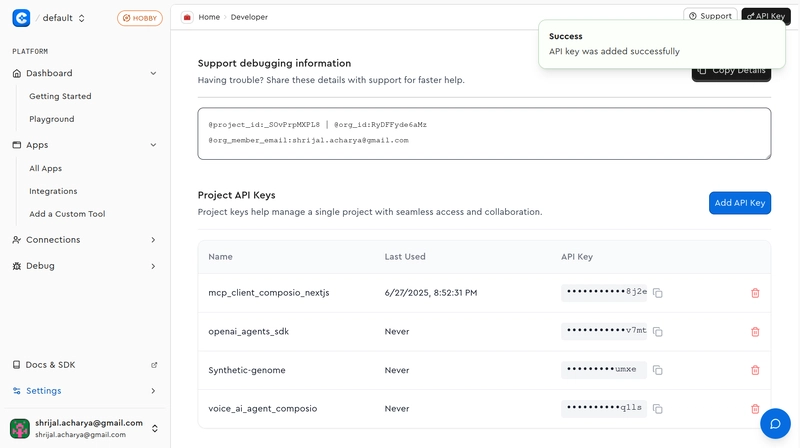

首先,在继续操作之前,您需要获取 Composio API 密钥。

请前往 Composio 创建一个帐户,获取您的 API 密钥,并将其粘贴到.env项目根目录下的文件中。

COMPOSIO_API_KEY=<your_composio_api_key>

Now you need to install the composio CLI, which you can do with the following command:

sudo npm i -g composio-core

Log in to Composio with the following command:

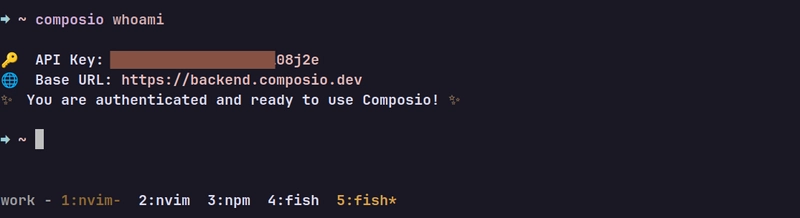

composio login

完成上述步骤后,运行该composio whoami命令,如果您看到类似以下示例的内容,则表示您已成功登录。

现在,您可以自行决定支持哪些集成。不妨添加一些集成吧。

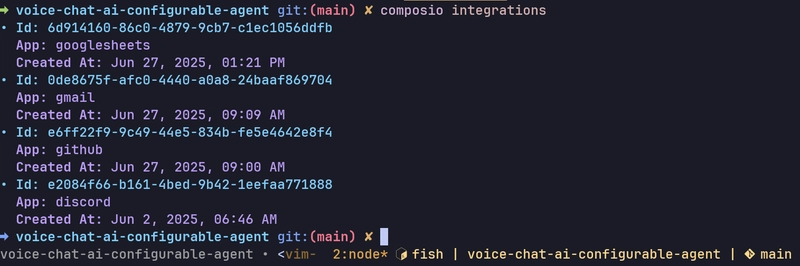

按照终端中的说明运行以下命令来设置集成:

composio add <integration_name>

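The full list of available integrations is on the Composio site.

After adding integrations, run the composio integrations command, and you should see something like this:

I've added a few for myself, and now the application can easily connect to and use all the tools we've authenticated with. 🎉

Install and Set Up Shadcn/UI

Shadcn/UI comes with many ready-made UI components, so we'll use it for this project. Run the following command to initialize it with the default settings: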

npx shadcn@latest init -d

We'll need a few UI components, but this project won't focus much on the UI. We'll keep it simple and concentrate on the logic.

npx shadcn@latest add button dialog input label separator

This should add five files to the components/ui directory: button.tsx, dialog.tsx, input.tsx, label.tsx, and separator.tsx.

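Code Implementation

🙋‍♂️ In this section, we'll cover all the coding needed to create the chat interface, work with speech recognition, and connect it to Composio tools.

Add Helper Functions

Before writing the project logic, let's write some helper functions and constants that we'll use throughout the project.

Let's start by setting up some constants. Create a new file named constants.ts in the lib directory (the rest of the code imports it as @/lib/constants) and add the following code: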

export const CONFIG = {

SPEECH_DEBOUNCE_MS: 1500,

MAX_TOOL_ITERATIONS: 10,

OPENAI_MODEL: "gpt-4o-mini",

TTS_MODEL: "tts-1",

TTS_VOICE: "echo" as const,

} as const;

export const SYSTEM_MESSAGES = {

INTENT_CLASSIFICATION: `You are an intent classification expert. Your job is to

determine if a user's request requires executing an action with a tool (like

sending an email, fetching data, creating a task) or if it's a general

conversational question (like 'hello', 'what is the capital of France?').

- If it's an action, classify as 'TOOL_USE'.

- If it's a general question or greeting, classify as 'GENERAL_CHAT'.`,

APP_IDENTIFICATION: (availableApps: string[]) =>

`You are an expert at identifying which software

applications a user wants to interact with. Given a list of available

applications, determine which ones are relevant to the user's request.

Available applications: ${availableApps.join(", ")}`,

ALIAS_MATCHING: (aliasNames: string[]) =>

`You are a smart assistant that identifies relevant

parameters. Based on the user's message, identify which of the available

aliases are being referred to. Only return the names of the aliases that are

relevant.

Available alias names: ${aliasNames.join(", ")}`,

TOOL_EXECUTION: `You are a powerful and helpful AI assistant. Your goal is to use the

provided tools to fulfill the user's request completely. You can use multiple

tools in sequence if needed. Once you have finished, provide a clear, concise

summary of what you accomplished.`,

SUMMARY_GENERATION: `You are a helpful assistant. Your task is to create a brief, friendly,

and conversational summary of the actions that were just completed for the

user. Focus on what was accomplished. Start with a friendly confirmation like

'All set!', 'Done!', or 'Okay!'.`,

} as const;

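These are the constants we'll use throughout the project. You might be wondering what the aliases passed into some of these prompts are.

In short, an alias is a stored key-value pair: a friendly name mapped to a value. For example, when working with Discord, you might have an alias named "gaming channel id" that holds the ID of your gaming channel.

We use aliases because saying IDs, email addresses, and the like out loud isn't practical. Instead, you set up an alias once and refer to it by name.

🗣️ Say "Can you summarize the recent chats in my gaming channel?" and the relevant alias is passed to the LLM, which in turn calls the Composio API with the right fields.

If you're confused right now, no worries. Follow along, and you'll soon see how it all fits together.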
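To make the alias idea concrete, here is a minimal sketch of how a friendly name could be turned into context for the LLM. The helper name `withAliasContext` is hypothetical and exists only for illustration; the `--- Relevant Parameters ---` block follows the same format the chat route uses further down.

```typescript
// Hypothetical helper, for illustration only.
interface Alias {
  name: string;
  value: string;
}

// Append the selected aliases to the spoken request as "name = value" lines,
// so the model can see the concrete IDs behind the friendly names.
function withAliasContext(message: string, relevant: Alias[]): string {
  if (relevant.length === 0) return message;
  const block = relevant.map((a) => `${a.name} = ${a.value}`).join("\n");
  return `${message}\n\n--- Relevant Parameters ---\n${block}`;
}

const spoken = "Can you summarize the recent chats in my gaming channel?";
const contextualized = withAliasContext(spoken, [
  { name: "gaming channel id", value: "123456789" },
]);

console.log(contextualized);
```

The user never has to say the ID out loud; the model receives it as plain text next to the request.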

Now, let's set up the store that keeps all the aliases in localStorage. Create a new file named alias-store.ts in the lib directory and add the following code:

// 👇 voice-chat-ai-configurable-agent/lib/alias-store.ts

import { create } from "zustand";

import { persist, createJSONStorage } from "zustand/middleware";

export interface Alias {

name: string;

value: string;

}

export interface IntegrationAliases {

[integrationName: string]: Alias[];

}

interface AliasState {

aliases: IntegrationAliases;

addAlias: (integration: string, alias: Alias) => void;

removeAlias: (integration: string, aliasName: string) => void;

editAlias: (

integration: string,

oldAliasName: string,

newAlias: Alias,

) => void;

}

export const useAliasStore = create<AliasState>()(

persist(

(set) => ({

aliases: {},

addAlias: (integration, alias) =>

set((state) => ({

aliases: {

...state.aliases,

[integration]: [...(state.aliases[integration] || []), alias],

},

})),

removeAlias: (integration, aliasName) =>

set((state) => ({

aliases: {

...state.aliases,

[integration]: state.aliases[integration].filter(

(a) => a.name !== aliasName,

),

},

})),

editAlias: (integration, oldAliasName, newAlias) =>

set((state) => ({

aliases: {

...state.aliases,

[integration]: state.aliases[integration].map((a) =>

a.name === oldAliasName ? newAlias : a,

),

},

})),

}),

{

name: "voice-agent-aliases-storage",

storage: createJSONStorage(() => localStorage),

},

),

);

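If you've used Zustand before, this setup should feel familiar. If not, here's a quick rundown: we have an Alias type that holds a key-value pair, an AliasState interface that represents the full alias state, and functions to add, edit, or remove an alias.

Each alias is grouped under an integration name (such as "Slack" or "Discord"), which makes it easy to manage them per service. They're stored in an aliases object typed as IntegrationAliases, which maps integration names to arrays of aliases.

We use the persist middleware to persist the aliases so they aren't lost on page reload, which comes in handy.

Using createJSONStorage ensures the state is serialized and stored in localStorage under the key "voice-agent-aliases-storage".

ℹ️ For this tutorial, I've kept things simple and stored the aliases in localStorage, which should be fine, but if you're interested, you could just as well store them in a database.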
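Stripped of Zustand, the three store actions are plain immutable updates on a map from integration name to alias list. The sketch below restates them as standalone functions so the copy-on-write behavior is easy to check in isolation. These free-function versions are illustrative and not part of the project code, and unlike the store they tolerate an integration that has no aliases yet.

```typescript
interface Alias {
  name: string;
  value: string;
}

type IntegrationAliases = Record<string, Alias[]>;

// Return a new map with `alias` appended under `integration`.
function addAlias(all: IntegrationAliases, integration: string, alias: Alias): IntegrationAliases {
  return { ...all, [integration]: [...(all[integration] || []), alias] };
}

// Return a new map without the alias called `aliasName`.
function removeAlias(all: IntegrationAliases, integration: string, aliasName: string): IntegrationAliases {
  return {
    ...all,
    [integration]: (all[integration] || []).filter((a) => a.name !== aliasName),
  };
}

// Return a new map where the alias called `oldName` is swapped for `next`.
function editAlias(all: IntegrationAliases, integration: string, oldName: string, next: Alias): IntegrationAliases {
  return {
    ...all,
    [integration]: (all[integration] || []).map((a) => (a.name === oldName ? next : a)),
  };
}

const empty: IntegrationAliases = {};
const added = addAlias(empty, "discord", { name: "gaming channel id", value: "123456789" });
const edited = editAlias(added, "discord", "gaming channel id", {
  name: "gaming channel id",
  value: "987654321",
});
const removed = removeAlias(edited, "discord", "gaming channel id");

console.log(JSON.stringify(added));
console.log(JSON.stringify(edited));
console.log(JSON.stringify(removed));
```

Each call returns a fresh object and leaves its input untouched, which is what lets Zustand detect the change and re-render subscribers.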

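Now, let's add another helper that returns the right error message and status code based on the error thrown by the application.

Create a new file named error-handler.ts in the lib directory and add the following lines of code: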

// 👇 voice-chat-ai-configurable-agent/lib/error-handler.ts

export class AppError extends Error {

constructor(

message: string,

public statusCode: number = 500,

public code?: string,

) {

super(message);

this.name = "AppError";

}

}

export function handleApiError(error: unknown): {

message: string;

statusCode: number;

} {

if (error instanceof AppError) {

return {

message: error.message,

statusCode: error.statusCode,

};

}

if (error instanceof Error) {

return {

message: error.message,

statusCode: 500,

};

}

return {

message: "An unexpected error occurred",

statusCode: 500,

};

}

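We define a custom error class called AppError that extends the built-in Error class. We don't use it in our program yet, since none of our API endpoints needs to throw a custom error.

However, you can use it if you extend the application's functionality and need to throw errors with a specific status code.

handleApiError is pretty simple; it takes an error and returns a message and status code based on the error type.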
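As a sketch of how the two pieces could work together once you do need custom errors, the example below throws an AppError from a guard and lets handleApiError translate it. The guard `requireIntegration`, the wrapper `tryRequire`, and the error code string are hypothetical names made up for this example; AppError and handleApiError are repeated in compact form so the snippet runs on its own.

```typescript
class AppError extends Error {
  statusCode: number;
  code: string | undefined;

  constructor(message: string, statusCode: number = 500, code?: string) {
    super(message);
    this.name = "AppError";
    this.statusCode = statusCode;
    this.code = code;
  }
}

function handleApiError(error: unknown): { message: string; statusCode: number } {
  if (error instanceof AppError) {
    return { message: error.message, statusCode: error.statusCode };
  }
  if (error instanceof Error) {
    return { message: error.message, statusCode: 500 };
  }
  return { message: "An unexpected error occurred", statusCode: 500 };
}

// Hypothetical guard: reject a request for an integration we know nothing about.
function requireIntegration(known: string[], wanted: string): void {
  if (known.indexOf(wanted) === -1) {
    throw new AppError(`Unknown integration: ${wanted}`, 404, "INTEGRATION_NOT_FOUND");
  }
}

// Hypothetical wrapper that mimics what a route handler's try/catch would do.
function tryRequire(known: string[], wanted: string): { message: string; statusCode: number } {
  try {
    requireIntegration(known, wanted);
    return { message: "ok", statusCode: 200 };
  } catch (error) {
    return handleApiError(error);
  }
}

console.log(tryRequire(["slack"], "discord"));
console.log(handleApiError("just a string"));
```

The route code stays free of status-code bookkeeping: it throws, and one function decides what the client sees.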

Finally, let's round off the helpers with a Zod validator for the user input.

Create a new file named message-validator.ts in the lib directory and add the following lines of code:

// 👇 voice-chat-ai-configurable-agent/lib/message-validator.ts

import { z } from "zod";

export const messageSchema = z.object({

message: z.string(),

aliases: z.record(

z.array(

z.object({

name: z.string(),

value: z.string(),

}),

),

),

});

export type TMessageSchema = z.infer<typeof messageSchema>;

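The messageSchema Zod schema validates an object with a message string and an aliases record, where each key maps to an array of { name, value } string pairs.

It looks something like this: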

{

message: "Summarize the recent chats in my gaming channel",

aliases: {

slack: [

{ name: "office channel", value: "#office" },

],

discord: [

{ name: "gaming channel id", value: "123456789" }

],

// some others if you have them...

}

}

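The idea is that with every message sent, we also send all the configured aliases. We then use an LLM to work out which aliases are needed to handle the user's query.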
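To see which payloads pass and which don't, here is a hand-written type guard that approximates the same shape check without any library. It is only a sketch for building intuition (`isChatRequest` and `isAlias` are hypothetical names); in the project, Zod performs this check and also produces readable error messages.

```typescript
interface Alias {
  name: string;
  value: string;
}

interface ChatRequest {
  message: string;
  aliases: Record<string, Alias[]>;
}

function isAlias(value: unknown): value is Alias {
  if (typeof value !== "object" || value === null) return false;
  const record = value as Record<string, unknown>;
  return typeof record["name"] === "string" && typeof record["value"] === "string";
}

// Accepts { message: string, aliases: { [integration]: Alias[] } } and nothing else.
function isChatRequest(value: unknown): value is ChatRequest {
  if (typeof value !== "object" || value === null) return false;
  const record = value as Record<string, unknown>;
  if (typeof record["message"] !== "string") return false;

  const aliases = record["aliases"];
  if (typeof aliases !== "object" || aliases === null || Array.isArray(aliases)) return false;

  const map = aliases as Record<string, unknown>;
  return Object.keys(map).every((key) => {
    const list = map[key];
    return Array.isArray(list) && list.every(isAlias);
  });
}

const valid = {
  message: "Summarize the recent chats in my gaming channel",
  aliases: { discord: [{ name: "gaming channel id", value: "123456789" }] },
};
const missingValue = {
  message: "hi",
  aliases: { slack: [{ name: "office channel" }] },
};

console.log(isChatRequest(valid));
console.log(isChatRequest(missingValue));
```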

Now that the helpers are done, let's start on the main logic of the application. 🎉

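Create Custom Hooks

We'll create a few hooks to handle audio, speech recognition, and so on.

Create a new directory named hooks in the root of the project, and inside it create a new file named use-speech-recognition.ts with the following lines of code: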

// 👇 voice-chat-ai-configurable-agent/hooks/use-speech-recognition.ts

import { useEffect, useRef } from "react";

import { useDebounce } from "use-debounce";

import SpeechRecognition, {

useSpeechRecognition,

} from "react-speech-recognition";

import { CONFIG } from "@/lib/constants";

interface UseSpeechRecognitionWithDebounceProps {

onTranscriptComplete: (transcript: string) => void;

debounceMs?: number;

}

export const useSpeechRecognitionWithDebounce = ({

onTranscriptComplete,

debounceMs = CONFIG.SPEECH_DEBOUNCE_MS,

}: UseSpeechRecognitionWithDebounceProps) => {

const {

transcript,

listening,

resetTranscript,

browserSupportsSpeechRecognition,

} = useSpeechRecognition();

const [debouncedTranscript] = useDebounce(transcript, debounceMs);

const lastProcessedTranscript = useRef<string>("");

useEffect(() => {

if (

debouncedTranscript &&

debouncedTranscript !== lastProcessedTranscript.current &&

listening

) {

lastProcessedTranscript.current = debouncedTranscript;

SpeechRecognition.stopListening();

onTranscriptComplete(debouncedTranscript);

resetTranscript();

}

}, [debouncedTranscript, listening, onTranscriptComplete, resetTranscript]);

const startListening = () => {

resetTranscript();

lastProcessedTranscript.current = "";

SpeechRecognition.startListening({ continuous: true });

};

const stopListening = () => {

SpeechRecognition.stopListening();

};

return {

transcript,

listening,

resetTranscript,

browserSupportsSpeechRecognition,

startListening,

stopListening,

};

};

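We use react-speech-recognition to handle voice input and add debouncing on top so we don't trigger an action on every tiny change.

Basically, whenever the transcript stops changing for a while (debounceMs) and differs from the last transcript we processed, we stop listening, call onTranscriptComplete, and reset the transcript.

startListening clears the old data and starts speech recognition in continuous mode. And stopListening... well, stops listening. 🥴

That's it. It's a simple hook for managing voice input, with debouncing so that the transcript is submitted after a short delay rather than the instant we pause speaking.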
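To build some intuition for the timing, the sketch below models a debounced value without React or timers. Given the moments (in milliseconds) at which the transcript changed, it computes when a debounced copy would first update. The function name `firstSettleTime` is hypothetical, and this is a simplified model of the behavior rather than how use-debounce is implemented.

```typescript
// Return the time at which a debounced copy of the transcript would first
// update, or null if the transcript never changed. A debounced value only
// updates once the source has been quiet for `debounceMs`.
function firstSettleTime(changeTimes: number[], debounceMs: number): number | null {
  let last: number | null = null;
  for (const time of changeTimes) {
    // A long enough pause before this change means the timer already fired.
    if (last !== null && time - last >= debounceMs) break;
    last = time;
  }
  return last === null ? null : last + debounceMs;
}

// Words arrive in quick succession, so nothing fires until 1500 ms after the last one.
console.log(firstSettleTime([0, 400, 900, 1300], 1500));

// A pause of more than 1500 ms after the second word fires before the third arrives.
console.log(firstSettleTime([0, 400, 2500], 1500));
```

With the 1500 ms default from CONFIG, a natural pause between words doesn't cut the request short, while a real stop submits it about a second and a half later.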

Now that we've covered speech input, let's move on to audio. Create a new file named use-audio.ts in the hooks directory and add the following code:

// 👇 voice-chat-ai-configurable-agent/hooks/use-audio.ts

import { useCallback, useRef, useState } from "react";

export const useAudio = () => {

const [isPlaying, setIsPlaying] = useState<boolean>(false);

const currentSourceRef = useRef<AudioBufferSourceNode | null>(null);

const audioContextRef = useRef<AudioContext | null>(null);

const stopAudio = useCallback(() => {

if (currentSourceRef.current) {

try {

currentSourceRef.current.stop();

} catch (error) {

console.error("Error stopping audio:", error);

}

currentSourceRef.current = null;

}

setIsPlaying(false);

}, []);

const playAudio = useCallback(

async (text: string) => {

try {

stopAudio();

const response = await fetch("/api/tts", {

method: "POST",

headers: { "Content-Type": "application/json" },

body: JSON.stringify({ text }),

});

if (!response.ok) throw new Error("Failed to generate audio");

const AudioContext =

// eslint-disable-next-line @typescript-eslint/no-explicit-any

window.AudioContext || (window as any).webkitAudioContext;

const audioContext = new AudioContext();

audioContextRef.current = audioContext;

const audioData = await response.arrayBuffer();

const audioBuffer = await audioContext.decodeAudioData(audioData);

const source = audioContext.createBufferSource();

currentSourceRef.current = source;

source.buffer = audioBuffer;

source.connect(audioContext.destination);

setIsPlaying(true);

source.onended = () => {

setIsPlaying(false);

currentSourceRef.current = null;

};

source.start(0);

} catch (error) {

console.error("Error playing audio:", error);

setIsPlaying(false);

currentSourceRef.current = null;

}

},

[stopAudio],

);

return { playAudio, stopAudio, isPlaying };

};

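Its job is simple: play or stop audio using the Web Audio API. We'll use it to handle playback of the speech generated by OpenAI's TTS.

The playAudio function takes a piece of text, sends it to an API endpoint (/api/tts), gets the audio response, decodes it, and plays it in the browser. It uses AudioContext under the hood and manages state such as whether audio is currently playing (isPlaying). We also expose a stopAudio function to stop playback early if needed.

We haven't implemented the /api/tts route yet, but we will shortly.

Now, let's implement another hook for handling chat. Basically, we'll use it to handle all the messages. Create a new file named use-chat.ts in the hooks directory and add the following code: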

// 👇 voice-chat-ai-configurable-agent/hooks/use-chat.ts

import { useState, useCallback } from "react";

import { useAliasStore } from "@/lib/alias-store";

export interface Message {

id: string;

role: "user" | "assistant";

content: string;

}

export const useChat = () => {

const [messages, setMessages] = useState<Message[]>([]);

const [isLoading, setIsLoading] = useState<boolean>(false);

const { aliases } = useAliasStore();

const sendMessage = useCallback(

async (text: string) => {

if (!text.trim() || isLoading) return null;

const userMessage: Message = {

id: Date.now().toString(),

role: "user",

content: text,

};

setMessages((prev) => [...prev, userMessage]);

setIsLoading(true);

try {

const response = await fetch("/api/chat", {

method: "POST",

headers: { "content-type": "application/json" },

body: JSON.stringify({ message: text, aliases }),

});

if (!response.ok) throw new Error("Failed to generate response");

const result = await response.json();

const botMessage: Message = {

id: (Date.now() + 1).toString(),

role: "assistant",

content: result.content,

};

setMessages((prev) => [...prev, botMessage]);

return botMessage;

} catch (err) {

console.error("Error generating response:", err);

const errorMessage: Message = {

id: (Date.now() + 1).toString(),

role: "assistant",

content: "Error generating response",

};

setMessages((prev) => [...prev, errorMessage]);

return errorMessage;

} finally {

setIsLoading(false);

}

},

[aliases, isLoading],

);

return {

messages,

isLoading,

sendMessage,

};

};

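This one is pretty simple. We use useChat to manage a basic chat flow: it keeps track of all the messages and whether we're currently waiting for a reply.

When sendMessage is called, it adds the user's input to the chat, hits our /api/chat route with the message and the aliases, and then updates the messages with the assistant's reply. If the request fails, we show a fallback error message instead. That's it.

We're almost done with the hooks. Since we've modularized everything into hooks, why not create one more small helper hook for checking whether a component has mounted?

Create a new file named use-mounted.ts in the hooks directory and add the following lines of code: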

// 👇 voice-chat-ai-configurable-agent/hooks/use-mounted.ts

import { useEffect, useState } from "react";

export const useMounted = () => {

const [hasMounted, setHasMounted] = useState<boolean>(false);

useEffect(() => {

setHasMounted(true);

}, []);

return hasMounted;

};

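It's a small hook that checks whether the component has mounted on the client. It returns true after the first render, which is handy for skipping client-only work during server-side rendering (SSR).

With all four hooks in place, we're done with the hooks. Next, let's start building the API.

Build the API Logic

Great, now it makes sense to work on the API first and then move on to the UI.

From the project root, create two route directories under app:

mkdir -p app/api/tts && mkdir app/api/chat

Great, now let's implement the routes. In the api/tts directory, create a new file named route.ts and add the following code: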

// 👇 voice-chat-ai-configurable-agent/app/api/tts/route.ts

import { NextRequest, NextResponse } from "next/server";

import OpenAI from "openai";

import { CONFIG } from "@/lib/constants";

import { handleApiError } from "@/lib/error-handler";

const OPENAI_API_KEY = process.env.OPENAI_API_KEY;

if (!OPENAI_API_KEY) {

throw new Error("OPENAI_API_KEY environment variable is not set");

}

const openai = new OpenAI({

apiKey: OPENAI_API_KEY,

});

export async function POST(req: NextRequest) {

try {

const { text } = await req.json();

if (!text) return new NextResponse("Text is required", { status: 400 });

const mp3 = await openai.audio.speech.create({

model: CONFIG.TTS_MODEL,

voice: CONFIG.TTS_VOICE,

input: text,

});

const buffer = Buffer.from(await mp3.arrayBuffer());

return new NextResponse(buffer, {

headers: {

"content-type": "audio/mpeg",

},

});

} catch (error) {

console.error("API /tts", error);

const { statusCode } = handleApiError(error);

return new NextResponse("Error generating response audio", {

status: statusCode,

});

}

}

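This is our /api/tts route, which takes text and generates MP3 audio using OpenAI's TTS API. We grab the text from the request body, call OpenAI with the model and voice we set in CONFIG, and get back the MP3 audio.

The important bit is that the OpenAI response gives us an arrayBuffer, so we first convert it to a Node Buffer before sending the response back to the client.

Great, now for the main logic of our application: working out whether the user is asking for a tool call. If so, we look up any relevant aliases; if not, we generate a generic response.

Create a new file named route.ts in the api/chat directory and add the following lines of code: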

// 👇 voice-chat-ai-configurable-agent/app/api/chat/route.ts

import { NextRequest, NextResponse } from "next/server";

import { z } from "zod";

import { OpenAIToolSet } from "composio-core";

import { Alias } from "@/lib/alias-store";

import {

SystemMessage,

HumanMessage,

ToolMessage,

BaseMessage,

} from "@langchain/core/messages";

import { ChatOpenAI } from "@langchain/openai";

import { messageSchema } from "@/lib/message-validator";

import { ChatCompletionMessageToolCall } from "openai/resources/chat/completions.mjs";

import { v4 as uuidv4 } from "uuid";

import { CONFIG, SYSTEM_MESSAGES } from "@/lib/constants";

import { handleApiError } from "@/lib/error-handler";

const OPENAI_API_KEY = process.env.OPENAI_API_KEY;

const COMPOSIO_API_KEY = process.env.COMPOSIO_API_KEY;

if (!OPENAI_API_KEY) {

throw new Error("OPENAI_API_KEY environment variable is not set");

}

if (!COMPOSIO_API_KEY) {

throw new Error("COMPOSIO_API_KEY environment variable is not set");

}

const llm = new ChatOpenAI({

model: CONFIG.OPENAI_MODEL,

apiKey: OPENAI_API_KEY,

temperature: 0,

});

const toolset = new OpenAIToolSet({ apiKey: COMPOSIO_API_KEY });

export async function POST(req: NextRequest) {

try {

const body = await req.json();

const parsed = messageSchema.safeParse(body);

if (!parsed.success) {

return NextResponse.json(

{

error: parsed.error.message,

},

{ status: 400 },

);

}

const { message, aliases } = parsed.data;

const isToolUseNeeded = await checkToolUseIntent(message);

if (!isToolUseNeeded) {

console.log("handling as a general chat");

const chatResponse = await llm.invoke([new HumanMessage(message)]);

return NextResponse.json({

content: chatResponse.text,

});

}

console.log("Handling as a tool-use request.");

const availableApps = Object.keys(aliases);

if (availableApps.length === 0) {

return NextResponse.json({

content: `I can't perform any actions yet. Please add some integration

parameters in the settings first.`,

});

}

const targetApps = await identifyTargetApps(message, availableApps);

if (targetApps.length === 0) {

return NextResponse.json({

content: `I can't perform any actions yet. Please add some integration

parameters in the settings first.`,

});

}

console.log("Identified target apps:", targetApps);

for (const app of targetApps) {

if (!aliases[app] || aliases[app].length === 0) {

console.warn(

`User mentioned app '${app}' but no aliases are configured.`,

);

return NextResponse.json({

content: `To work with ${app}, you first need to add its required

parameters (like a channel ID or URL) in the settings.`,

});

}

}

const aliasesForTargetApps = targetApps.flatMap(

(app) => aliases[app] || [],

);

const relevantAliases = await findRelevantAliases(

message,

aliasesForTargetApps,

);

let contextualizedMessage = message;

if (relevantAliases.length > 0) {

const contextBlock = relevantAliases

.map((alias) => `${alias.name} = ${alias.value}`)

.join("\n");

contextualizedMessage += `\n\n--- Relevant Parameters ---\n${contextBlock}`;

console.log("Contextualized message:", contextualizedMessage);

}

const finalResponse = await executeToolCallingLogic(

contextualizedMessage,

targetApps,

);

return NextResponse.json({ content: finalResponse });

} catch (error) {

console.error("API /chat", error);

const { message, statusCode } = handleApiError(error);

return NextResponse.json(

{ content: `Sorry, I encountered an error: ${message}` },

{ status: statusCode },

);

}

}

async function checkToolUseIntent(message: string): Promise<boolean> {

const intentSchema = z.object({

intent: z

.enum(["TOOL_USE", "GENERAL_CHAT"])

.describe("Classify the user's intent."),

});

const structuredLlm = llm.withStructuredOutput(intentSchema);

const result = await structuredLlm.invoke([

new SystemMessage(SYSTEM_MESSAGES.INTENT_CLASSIFICATION),

new HumanMessage(message),

]);

return result.intent === "TOOL_USE";

}

async function identifyTargetApps(

message: string,

availableApps: string[],

): Promise<string[]> {

const structuredLlm = llm.withStructuredOutput(

z.object({

apps: z.array(z.string()).describe(

`A list of application names mentioned or implied in the user's

message, from the available apps list.`,

),

}),

);

const result = await structuredLlm.invoke([

new SystemMessage(SYSTEM_MESSAGES.APP_IDENTIFICATION(availableApps)),

new HumanMessage(message),

]);

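// Compare case-insensitively and return the configured keys themselves,

// so the aliases[app] lookups in POST() still resolve.

const wanted = result.apps.map((app) => app.toLowerCase());

return availableApps.filter((app) => wanted.includes(app.toLowerCase()));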

}

async function findRelevantAliases(

message: string,

aliasesToSearch: Alias[],

): Promise<Alias[]> {

if (aliasesToSearch.length === 0) return [];

const aliasNames = aliasesToSearch.map((alias) => alias.name);

const structuredLlm = llm.withStructuredOutput(

z.object({

relevantAliasNames: z.array(z.string()).describe(

`An array of alias names that are directly mentioned or semantically

related to the user's message.`,

),

}),

);

try {

const result = await structuredLlm.invoke([

new SystemMessage(SYSTEM_MESSAGES.ALIAS_MATCHING(aliasNames)),

new HumanMessage(message),

]);

return aliasesToSearch.filter((alias) =>

result.relevantAliasNames.includes(alias.name),

);

} catch (error) {

console.error("Failed to find relevant aliases:", error);

return [];

}

}

async function executeToolCallingLogic(

contextualizedMessage: string,

targetApps: string[],

): Promise<string> {

const composioAppNames = targetApps.map((app) => app.toUpperCase());

console.log(

`Fetching Composio tools for apps: ${composioAppNames.join(", ")}...`,

);

const tools = await toolset.getTools({ apps: [...composioAppNames] });

if (!tools || tools.length === 0) {

console.warn("No tools found from Composio for the specified apps.");

return `I couldn't find any actions for ${targetApps.join(" and ")}. Please

check your Composio connections.`;

}

console.log(`Fetched ${tools.length} tools from Composio.`);

const conversationHistory: BaseMessage[] = [

new SystemMessage(SYSTEM_MESSAGES.TOOL_EXECUTION),

new HumanMessage(contextualizedMessage),

];

const maxIterations = CONFIG.MAX_TOOL_ITERATIONS;

for (let i = 0; i < maxIterations; i++) {

console.log(`Iteration ${i + 1}: Calling LLM with ${tools.length} tools.`);

const llmResponse = await llm.invoke(conversationHistory, { tools });

conversationHistory.push(llmResponse);

const toolCalls = llmResponse.tool_calls;

if (!toolCalls || toolCalls.length === 0) {

console.log("No tool calls found in LLM response.");

return llmResponse.text;

}

// totalToolsUsed += toolCalls.length;

const toolOutputs: ToolMessage[] = [];

for (const toolCall of toolCalls) {

const composioToolCall: ChatCompletionMessageToolCall = {

id: toolCall.id || uuidv4(),

type: "function",

function: {

name: toolCall.name,

arguments: JSON.stringify(toolCall.args),

},

};

try {

const executionResult = await toolset.executeToolCall(composioToolCall);

toolOutputs.push(

new ToolMessage({

content: executionResult,

tool_call_id: toolCall.id!,

}),

);

} catch (error) {

toolOutputs.push(

new ToolMessage({

content: `Error executing tool: ${error instanceof Error ? error.message : String(error)}`,

tool_call_id: toolCall.id!,

}),

);

}

}

conversationHistory.push(...toolOutputs);

}

console.log("Generating final summary...");

const summaryResponse = await llm.invoke([

new SystemMessage(SYSTEM_MESSAGES.SUMMARY_GENERATION),

new HumanMessage(

`Based on this conversation history, provide a summary of what was done.

The user's original request is in the first HumanMessage.\n\nConversation

History:\n${JSON.stringify(conversationHistory.slice(0, 4), null, 2)}...`,

),

]);

return summaryResponse.text;

}

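First, we parse the request body and validate it with Zod (messageSchema). If validation passes, we check whether the message needs a tool using checkToolUseIntent(). If it doesn't, it's just a regular chat message, so we pass it to the LLM (llm.invoke) and return the response.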

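If a tool is needed, we take the available apps from the user's saved aliases, then try to figure out which of those apps the message actually refers to using identifyTargetApps().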
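One detail worth getting right here is letter case: the model may answer "Gmail" or "GMAIL" while the user typed "gmail" in the settings. The sketch below shows one way to match the two lists case-insensitively while returning the keys exactly as the user configured them, so later lookups by key still work. The function name `matchConfiguredApps` is hypothetical and the sample app names are only examples.

```typescript
// Keep only the configured integration keys that the model's suggestions
// refer to, ignoring letter case and surrounding whitespace.
// The returned keys are spelled exactly as configured.
function matchConfiguredApps(suggested: string[], configured: string[]): string[] {
  const wanted = suggested.map((s) => s.trim().toLowerCase());
  return configured.filter((key) => wanted.indexOf(key.trim().toLowerCase()) !== -1);
}

const matched = matchConfiguredApps(
  ["Gmail", "GOOGLESHEETS", "notion"],
  ["gmail", "googlesheets", "slack"],
);

console.log(matched);
```

Suggestions that aren't configured (here "notion") are dropped, which also guards against the model inventing an app that isn't in the list.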

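Once we know which apps are in play, we narrow the aliases down to those apps and pass them to findRelevantAliases(). This again uses the LLM to guess which aliases are relevant to the message. If any are found, we append them to the message as a context block (--- Relevant Parameters ---) so the LLM knows what it's working with. From there, the heavy lifting is done by executeToolCallingLogic(). This is where the magic happens. We:

- fetch the tools for the selected apps from Composio,

- start a small conversation history,

- call the LLM and check whether it wants to use any tools (via tool_calls),

- execute each tool call,

- and feed the results back into the conversation.

We keep looping like this, up to MAX_TOOL_ITERATIONS times (10 in our config). As soon as the LLM replies without requesting a tool, we return that reply. If the loop hits the cap first, we ask the LLM for a clean summary of what just happened.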
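The loop described above can be sketched without any SDK by swapping the LLM and Composio for synchronous stand-ins. Everything here is hypothetical scaffolding (the `Model` and `ToolRunner` types, `runToolLoop`, the fake tool name); the real route is asynchronous and, when the cap is reached, asks the LLM for a summary instead of returning a fixed string.

```typescript
interface ToolCall {
  name: string;
  args: Record<string, unknown>;
}

interface ModelTurn {
  text: string;
  toolCalls: ToolCall[];
}

// Stand-ins for the LLM and for Composio's tool execution.
type Model = (history: string[]) => ModelTurn;
type ToolRunner = (call: ToolCall) => string;

function runToolLoop(request: string, model: Model, runTool: ToolRunner, maxIterations: number): string {
  const history: string[] = [`user: ${request}`];

  for (let i = 0; i < maxIterations; i++) {
    const turn = model(history);
    history.push(`assistant: ${turn.text}`);

    // No tool requested: the model considers the job done.
    if (turn.toolCalls.length === 0) return turn.text;

    for (const call of turn.toolCalls) {
      let output: string;
      try {
        output = runTool(call);
      } catch (error) {
        // A failing tool is reported back to the model rather than aborting the loop.
        output = `Error executing tool: ${error instanceof Error ? error.message : String(error)}`;
      }
      history.push(`tool(${call.name}): ${output}`);
    }
  }

  // Placeholder for the summary step used by the real route.
  return "Stopped after reaching the iteration cap.";
}

// Fake model: asks for one tool call, then answers once it sees a tool result.
const fakeModel: Model = (history) =>
  history.some((line) => line.indexOf("tool(") === 0)
    ? { text: "Done! I fetched your latest email.", toolCalls: [] }
    : { text: "", toolCalls: [{ name: "FAKE_FETCH_EMAIL", args: { limit: 1 } }] };

const answer = runToolLoop("Get my latest email", fakeModel, () => "1 email found", 10);

console.log(answer);
```

The cap matters because nothing else guarantees termination: a model that keeps requesting tools would otherwise loop, and bill you, forever.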

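Long story short:

💡 "Do we need a tool? No? Just chat. Yes? Find the apps → match the aliases → call the tools → summarize."

That's the heart of our application. If you've understood this, all that's left is wiring it up to the UI.

If you've been following along, feel free to try building the UI yourself. If not, let's keep going!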

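Integrate with the UI

Let's start with the modal where users assign aliases, as we discussed earlier. We'll use useAliasStore to access the aliases along with all the functions for adding, editing, and removing them.

This should be easy to follow since the logic is already done; it's just a matter of wiring that logic up to the UI.

Create a new file named settings-modal.tsx in the components directory and add the following lines of code: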

// 👇 voice-chat-ai-configurable-agent/components/settings-modal.tsx

"use client";

import { Button } from "@/components/ui/button";

import {

Dialog,

DialogClose,

DialogContent,

DialogDescription,

DialogFooter,

DialogHeader,

DialogTitle,

DialogTrigger,

} from "@/components/ui/dialog";

import { Input } from "@/components/ui/input";

import { Label } from "@/components/ui/label";

import { Separator } from "@/components/ui/separator";

import { useAliasStore } from "@/lib/alias-store";

import { Settings, Plus, Trash2, Edit, Check, X } from "lucide-react";

import { useState } from "react";

export function SettingsModal() {

const { aliases, addAlias, removeAlias, editAlias } = useAliasStore();

const [newIntegration, setNewIntegration] = useState<string>("");

const [newName, setNewName] = useState<string>("");

const [newValue, setNewValue] = useState<string>("");

const [editingKey, setEditingKey] = useState<string | null>(null);

const [editName, setEditName] = useState<string>("");

const [editValue, setEditValue] = useState<string>("");

const handleAddAlias = () => {

if (!newIntegration.trim() || !newName.trim() || !newValue.trim()) return;

addAlias(newIntegration, { name: newName, value: newValue });

setNewIntegration("");

setNewName("");

setNewValue("");

};

const handleEditStart = (

integration: string,

alias: { name: string; value: string },

) => {

const editKey = `${integration}:${alias.name}`;

setEditingKey(editKey);

setEditName(alias.name);

setEditValue(alias.value);

};

const handleEditSave = (integration: string, oldName: string) => {

if (!editName.trim() || !editValue.trim()) return;

editAlias(integration, oldName, { name: editName, value: editValue });

setEditingKey(null);

setEditName("");

setEditValue("");

};

const handleEditCancel = () => {

setEditingKey(null);

setEditName("");

setEditValue("");

};

const activeIntegrations = Object.entries(aliases).filter(

([, aliasList]) => aliasList && aliasList.length > 0,

);

return (

<Dialog>

<DialogTrigger asChild>

<Button className="flex items-center gap-2" variant="outline">

<Settings className="size-4" />

Add Params

</Button>

</DialogTrigger>

<DialogContent className="sm:max-w-[650px] max-h-[80vh] overflow-y-auto">

<DialogHeader>

<DialogTitle>Integration Parameters</DialogTitle>

<DialogDescription>

Manage your integration parameters and aliases. Add new parameters

or remove existing ones.

</DialogDescription>

</DialogHeader>

<div className="space-y-6">

{activeIntegrations.length > 0 && (

<div className="space-y-4">

<h3 className="text-sm font-medium text-muted-foreground uppercase tracking-wide">

Current Parameters

</h3>

{activeIntegrations.map(([integration, aliasList]) => (

<div key={integration} className="space-y-3">

<div className="flex items-center gap-2">

<div className="size-2 rounded-full bg-blue-500" />

<h4 className="font-medium capitalize">{integration}</h4>

</div>

<div className="space-y-2 pl-4">

{aliasList.map((alias) => {

const editKey = `${integration}:${alias.name}`;

const isEditing = editingKey === editKey;

return (

<div

key={alias.name}

className="flex items-center gap-3 p-3 border rounded-lg bg-muted/30"

>

<div className="flex-1 grid grid-cols-2 gap-3">

<div>

<Label className="text-xs text-muted-foreground">

Alias Name

</Label>

{isEditing ? (

<Input

value={editName}

onChange={(e) => setEditName(e.target.value)}

className="font-mono text-sm mt-1 h-8"

/>

) : (

<div className="font-mono text-sm mt-1">

{alias.name}

</div>

)}

</div>

<div>

<Label className="text-xs text-muted-foreground">

Value

</Label>

{isEditing ? (

<Input

value={editValue}

onChange={(e) => setEditValue(e.target.value)}

className="font-mono text-sm mt-1 h-8"

/>

) : (

<div

className="font-mono text-sm mt-1 truncate"

title={alias.value}

>

{alias.value}

</div>

)}

</div>

</div>

<div className="flex gap-1">

{isEditing ? (

<>

<Button

variant="default"

size="icon"

className="size-8"

onClick={() =>

handleEditSave(integration, alias.name)

}

disabled={

!editName.trim() || !editValue.trim()

}

>

<Check className="size-3" />

</Button>

<Button

variant="outline"

size="icon"

className="size-8"

onClick={handleEditCancel}

>

<X className="size-3" />

</Button>

</>

) : (

<>

<Button

variant="outline"

size="icon"

className="size-8"

onClick={() =>

handleEditStart(integration, alias)

}

>

<Edit className="size-3" />

</Button>

<Button

variant="destructive"

size="icon"

className="size-8"

onClick={() =>

removeAlias(integration, alias.name)

}

>

<Trash2 className="size-3" />

</Button>

</>

)}

</div>

</div>

);

})}

</div>

</div>

))}

</div>

)}

{activeIntegrations.length > 0 && <Separator />}

<div className="space-y-4">

<h3 className="text-sm font-medium text-muted-foreground uppercase tracking-wide">

Add New Parameter

</h3>

<div className="space-y-4 p-4 border rounded-lg bg-muted/30">

<div className="space-y-2">

<Label htmlFor="integration">Integration Type</Label>

<Input

id="integration"

placeholder="e.g., discord, slack, github"

value={newIntegration}

onChange={(e) => setNewIntegration(e.target.value)}

/>

</div>

<div className="grid grid-cols-2 gap-4">

<div className="space-y-2">

<Label htmlFor="alias-name">Alias Name</Label>

<Input

id="alias-name"

placeholder="e.g., myTeamChannel"

value={newName}

onChange={(e) => setNewName(e.target.value)}

/>

</div>

<div className="space-y-2">

<Label htmlFor="alias-value">Value</Label>

<Input

id="alias-value"

placeholder="ID, URL, or other value"

value={newValue}

onChange={(e) => setNewValue(e.target.value)}

/>

</div>

</div>

<Button

onClick={handleAddAlias}

className="w-full"

disabled={

!newIntegration.trim() || !newName.trim() || !newValue.trim()

}

>

<Plus className="h-4 w-4 mr-2" />

Add Parameter

</Button>

</div>

</div>

</div>

<DialogFooter>

<DialogClose asChild>

<Button variant="outline">Close</Button>

</DialogClose>

</DialogFooter>

</DialogContent>

</Dialog>

);

}

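Great, the modal is done. Next, let's implement the component responsible for displaying all the messages in the UI.

Create a new file named chat-messages.tsx in the components directory and add the following lines of code: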

// 👇 voice-chat-ai-configurable-agent/components/chat-messages.tsx
import { useEffect, useRef } from "react";
import { motion } from "framer-motion";
import { BotIcon, UserIcon } from "lucide-react";
import { Message } from "@/hooks/use-chat";

interface ChatMessagesProps {
  messages: Message[];
  isLoading: boolean;
}

export function ChatMessages({ messages, isLoading }: ChatMessagesProps) {
  const messagesEndRef = useRef<HTMLDivElement>(null);

  const scrollToBottom = () => {
    messagesEndRef.current?.scrollIntoView({ behavior: "smooth" });
  };

  useEffect(scrollToBottom, [messages]);

  if (messages.length === 0) {
    return (
      <div className="h-full flex items-center justify-center">
        <motion.div
          className="max-w-md mx-4 text-center"
          initial={{ y: 10, opacity: 0 }}
          animate={{ y: 0, opacity: 1 }}
        >
          <div className="p-8 flex flex-col items-center gap-4 text-zinc-500">
            <BotIcon className="w-16 h-16" />
            <h2 className="text-2xl font-semibold text-zinc-800">
              How can I help you today?
            </h2>
            <p>
              Use the microphone to speak or type your command below. You can
              configure shortcuts for IDs and URLs in the{" "}
              <span className="font-semibold text-zinc-600">settings</span>{" "}
              menu.
            </p>
          </div>
        </motion.div>
      </div>
    );
  }

  return (
    <div className="flex flex-col gap-2 w-full items-center">
      {messages.map((message) => (
        <motion.div
          key={message.id}
          className="flex flex-row gap-4 px-4 w-full md:max-w-[640px] py-4"
          initial={{ y: 10, opacity: 0 }}
          animate={{ y: 0, opacity: 1 }}
        >
          <div className="size-[24px] flex flex-col justify-start items-center flex-shrink-0 text-zinc-500">
            {message.role === "assistant" ? <BotIcon /> : <UserIcon />}
          </div>
          <div className="flex flex-col gap-1 w-full">
            <div className="text-zinc-800 leading-relaxed">
              {message.content}
            </div>
          </div>
        </motion.div>
      ))}
      {isLoading && (
        <div className="flex flex-row gap-4 px-4 w-full md:max-w-[640px] py-4">
          <div className="size-[24px] flex flex-col justify-center items-center flex-shrink-0 text-zinc-400">
            <BotIcon />
          </div>
          <div className="flex items-center gap-2 text-zinc-500">
            <span className="h-2 w-2 bg-current rounded-full animate-bounce [animation-delay:-0.3s]"></span>
            <span className="h-2 w-2 bg-current rounded-full animate-bounce [animation-delay:-0.15s]"></span>
            <span className="h-2 w-2 bg-current rounded-full animate-bounce"></span>
          </div>
        </div>
      )}
      <div ref={messagesEndRef} />
    </div>
  );
}

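This component receives the full list of messages and the isLoading prop, and all it does is render them in the UI.

The only interesting part of this code is messagesEndRef, which we use to scroll to the bottom of the message list whenever a new message is added.

Great, now that message display is set up, we can move on to the input side, where the user sends messages by voice or by chat.

Create a new file named chat-input.tsx in the components directory and add the following lines of code: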

// 👇 voice-chat-ai-configurable-agent/components/chat-input.tsx
import { FormEvent, useEffect, useState } from "react";
import { MicIcon, SendIcon, Square } from "lucide-react";
import { Input } from "@/components/ui/input";
import { Button } from "@/components/ui/button";

interface ChatInputProps {
  onSubmit: (message: string) => void;
  transcript: string;
  listening: boolean;
  isLoading: boolean;
  browserSupportsSpeechRecognition: boolean;
  onMicClick: () => void;
  isPlaying: boolean;
  onStopAudio: () => void;
}

export function ChatInput({
  onSubmit,
  transcript,
  listening,
  isLoading,
  browserSupportsSpeechRecognition,
  onMicClick,
  isPlaying,
  onStopAudio,
}: ChatInputProps) {
  const [inputValue, setInputValue] = useState<string>("");

  useEffect(() => {
    setInputValue(transcript);
  }, [transcript]);

  const handleSubmit = (e: FormEvent<HTMLFormElement>) => {
    e.preventDefault();
    if (inputValue.trim()) {
      onSubmit(inputValue);
      setInputValue("");
    }
  };

  return (
    <footer className="fixed bottom-0 left-0 right-0 bg-white">
      <div className="flex flex-col items-center pb-4">
        <form
          onSubmit={handleSubmit}
          className="flex items-center w-full md:max-w-[640px] max-w-[calc(100dvw-32px)] bg-zinc-100 rounded-full px-4 py-2 my-2 border"
        >
          <Input
            className="bg-transparent flex-grow outline-none text-zinc-800 placeholder-zinc-500 border-none focus-visible:ring-0 focus-visible:ring-offset-0"
            placeholder={listening ? "Listening..." : "Send a message..."}
            value={inputValue}
            onChange={(e) => setInputValue(e.target.value)}
            disabled={listening}
          />
          <Button
            type="button"
            onClick={onMicClick}
            size="icon"
            variant="ghost"
            className={`ml-2 size-9 rounded-full transition-all duration-200 ${
              listening
                ? "bg-red-500 hover:bg-red-600 text-white shadow-lg scale-105"
                : "bg-zinc-200 hover:bg-zinc-300 text-zinc-700 hover:scale-105"
            }`}
            aria-label={listening ? "Stop Listening" : "Start Listening"}
            disabled={!browserSupportsSpeechRecognition}
          >
            <MicIcon size={18} />
          </Button>
          {isPlaying && (
            <Button
              type="button"
              onClick={onStopAudio}
              size="icon"
              variant="ghost"
              className="ml-2 size-9 rounded-full transition-all duration-200 bg-orange-500 hover:bg-orange-600 text-white shadow-lg hover:scale-105"
              aria-label="Stop Audio"
            >
              <Square size={18} />
            </Button>
          )}
          <Button
            type="submit"
            size="icon"
            variant="ghost"
            className={`ml-2 size-9 rounded-full transition-all duration-200 ${
              inputValue.trim() && !isLoading
                ? "bg-blue-500 hover:bg-blue-600 text-white shadow-lg hover:scale-105"
                : "bg-zinc-200 text-zinc-400 cursor-not-allowed"
            }`}
            disabled={isLoading || !inputValue.trim()}
          >
            <SendIcon size={18} />
          </Button>
        </form>
        <p className="text-xs text-zinc-400">
          Made with 🤍 by Shrijal Acharya @shricodev
        </p>
      </div>
    </footer>
  );
}

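This component takes quite a few props, but most of them relate to voice input.

Its only real job is to call the onSubmit function passed in through props, which handleSubmit does whenever the form is submitted with non-empty input.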
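To make the submit rule easier to see (and to unit-test), here is a minimal sketch that pulls it out of the component. The names canSubmit and submitMessage are hypothetical and not part of the project. Note that this sketch also folds in the isLoading check, which the component itself applies only to the send button's disabled state.

```typescript
// Hypothetical helper (not in the original project): mirrors the send
// button's enabled condition, i.e. non-blank input and no request in flight.
function canSubmit(inputValue: string, isLoading: boolean): boolean {
  return inputValue.trim().length > 0 && !isLoading;
}

// Hypothetical helper: calls onSubmit when allowed and returns the value the
// input should hold afterwards ("" after a successful submit, unchanged otherwise).
function submitMessage(
  inputValue: string,
  isLoading: boolean,
  onSubmit: (message: string) => void,
): string {
  if (!canSubmit(inputValue, isLoading)) return inputValue;
  onSubmit(inputValue); // like handleSubmit, the text is passed through as typed
  return "";
}
```

Keeping the rule in a pure function like this is just one option; the inline check in handleSubmit works equally well for a component this small.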

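We pass the browserSupportsSpeechRecognition prop to the component because many browsers (including Firefox) still do not support speech recognition through the Web Speech API. In that case, the mic button is disabled and the user can only interact with the bot by typing.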
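In this project the flag comes from the speech recognition hook, but it can help to see roughly what such a check amounts to. The sketch below is an assumption about how feature detection could be done by hand, not the library's actual implementation; supportsSpeechRecognition and WindowLike are hypothetical names. It looks for the standard SpeechRecognition constructor or the prefixed webkitSpeechRecognition one that Chromium-based browsers expose.

```typescript
// Hypothetical shape: only the two properties we care about on `window`.
type WindowLike = {
  SpeechRecognition?: unknown;
  webkitSpeechRecognition?: unknown;
};

// Hypothetical helper: returns true when a speech recognition constructor
// is present on the given window-like object.
function supportsSpeechRecognition(win: WindowLike | undefined): boolean {
  if (!win) return false; // no window at all, e.g. during server-side rendering
  return (
    typeof win.SpeechRecognition === "function" ||
    typeof win.webkitSpeechRecognition === "function"
  );
}
```

In the browser you would pass in window (cast to the WindowLike shape if TypeScript complains), and pass undefined on the server, where there is no window.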

Since we're writing reusable code anyway, let's put the header in its own component too. Why not?

Create a new file named chat-header.tsx in the components directory and add the following lines of code:

// 👇 voice-chat-ai-configurable-agent/components/chat-header.tsx
import { SettingsModal } from "@/components/settings-modal";

export function ChatHeader() {
  return (
    <header className="fixed top-0 left-0 right-0 z-10 flex justify-between items-center p-4 border-b bg-white/80 backdrop-blur-md">
      <h1 className="text-xl font-semibold text-zinc-900">Voice AI Agent</h1>
      <SettingsModal />
    </header>
  );
}

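This component is very simple; it's just a header with a title and a settings button.

Okay, now let's put all of these UI components together in a single component, which we'll render in page.tsx, and that completes the project.

Create a new file named chat-interface.tsx in the components directory and add the following lines of code: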

// 👇 voice-chat-ai-configurable-agent/components/chat-interface.tsx
"use client";

import { useCallback } from "react";
import { useMounted } from "@/hooks/use-mounted";
import { useChat } from "@/hooks/use-chat";
import { useAudio } from "@/hooks/use-audio";
import { useSpeechRecognitionWithDebounce } from "@/hooks/use-speech-recognition";
import { ChatHeader } from "@/components/chat-header";
import { ChatMessages } from "@/components/chat-messages";
import { ChatInput } from "@/components/chat-input";

export function ChatInterface() {
  const hasMounted = useMounted();
  const { messages, isLoading, sendMessage } = useChat();
  const { playAudio, stopAudio, isPlaying } = useAudio();

  const handleProcessMessage = useCallback(
    async (text: string) => {
      const botMessage = await sendMessage(text);
      if (botMessage) await playAudio(botMessage.content);
    },
    [sendMessage, playAudio],
  );

  const {
    transcript,
    listening,
    resetTranscript,
    browserSupportsSpeechRecognition,
    startListening,
    stopListening,
  } = useSpeechRecognitionWithDebounce({
    onTranscriptComplete: handleProcessMessage,
  });

  const handleMicClick = () => {
    if (listening) {
      stopListening();
    } else {
      startListening();
    }
  };

  const handleInputSubmit = async (message: string) => {
    resetTranscript();
    await handleProcessMessage(message);
  };

  if (!hasMounted) return null;

  if (!browserSupportsSpeechRecognition) {
    return (
      <div className="flex flex-col h-dvh bg-white font-sans">
        <ChatHeader />
        <main className="flex-1 overflow-y-auto pt-20 pb-28">
          <div className="h-full flex items-center justify-center">
            <div className="max-w-md mx-4 text-center">
              <div className="p-8 flex flex-col items-center gap-4 text-zinc-500">
                <p className="text-red-500">
                  Sorry, your browser does not support speech recognition.
                </p>
              </div>
            </div>
          </div>
        </main>
        <ChatInput
          onSubmit={handleInputSubmit}
          transcript=""
          listening={false}
          isLoading={isLoading}
          browserSupportsSpeechRecognition={false}
          onMicClick={handleMicClick}
          isPlaying={isPlaying}
          onStopAudio={stopAudio}
        />
      </div>
    );
  }

  return (
    <div className="flex flex-col h-dvh bg-white font-sans">
      <ChatHeader />
      <main className="flex-1 overflow-y-auto pt-20 pb-28">
        <ChatMessages messages={messages} isLoading={isLoading} />
      </main>
      <ChatInput
        onSubmit={handleInputSubmit}
        transcript={transcript}
        listening={listening}
        isLoading={isLoading}
        browserSupportsSpeechRecognition={browserSupportsSpeechRecognition}
        onMicClick={handleMicClick}
        isPlaying={isPlaying}
        onStopAudio={stopAudio}
      />
    </div>
  );
}

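This one is straightforward. First, we check whether the component has mounted, because everything here needs to run on the client; as you know, these are browser-specific APIs. Then we pull all the fields out of the useSpeechRecognitionWithDebounce hook and render the appropriate UI depending on whether the browser supports speech recognition.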

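Once transcription completes, the message text is passed to the handleProcessMessage function, which in turn calls sendMessage. As you know, that function sends the message to our /api/chat API endpoint. If a bot message comes back, its content is handed to playAudio so the reply is spoken aloud.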
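The flow described above can be sketched outside React with the two dependencies injected, which makes the ordering easy to verify. This is only an illustration: createMessageProcessor, BotMessage, SendMessage, and PlayAudio are hypothetical names, and the sketch assumes sendMessage resolves to the assistant's message or to null on failure, as the `if (botMessage)` check in the component suggests.

```typescript
// Assumed shape of what sendMessage resolves to (only `content` is used here).
interface BotMessage {
  content: string;
}

type SendMessage = (text: string) => Promise<BotMessage | null>;
type PlayAudio = (text: string) => Promise<void>;

// Hypothetical factory: builds the same "send, then speak the reply" handler
// that handleProcessMessage implements inside the component.
function createMessageProcessor(sendMessage: SendMessage, playAudio: PlayAudio) {
  return async function processMessage(text: string): Promise<void> {
    const botMessage = await sendMessage(text); // e.g. POSTs to /api/chat
    if (botMessage) await playAudio(botMessage.content); // skip audio on failure
  };
}
```

Both the voice path (onTranscriptComplete) and the typed path (handleInputSubmit) funnel into this one handler, so the reply is spoken regardless of how the message was entered.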

Finally, update the page.tsx file in the app directory (src/app) to render the ChatInterface component.

// 👇 voice-chat-ai-configurable-agent/src/app/page.tsx
import { ChatInterface } from "@/components/chat-interface";

export default function Home() {
  return <ChatInterface />;
}

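And with that, our entire application is complete! 🎉

By the way, I've also built another, similar MCP-based chat app that can connect to remote MCP servers and even to locally hosted ones (whatever the server type)! If that sounds interesting, check it out: 👇

🎉 Build Your Own Chat MCP Client with Next.js ⚡

Shrijal Acharya for Composio ・ May 12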

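Conclusion

Whoa, that was a lot of work, but it was definitely worth it! Imagine controlling all your apps just by talking. Pretty cool, right? 😎

Honestly, this is perfectly usable in your day-to-day workflow, and I'd recommend giving it a try.

This was a really fun one to build, wasn't it? 👀

You can find the full source code here.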

Share your thoughts in the comments section below! 👇

Source: https://dev.to/composiodev/build-your-own-personal-voice-ai-agent-to-control-all-your-apps-2dfa